Starter App

This section walks through generating a starter application for use with IBM Event Streams, as described in the official product documentation.

The Starter application is an excellent way to demonstrate sending and consuming messages.

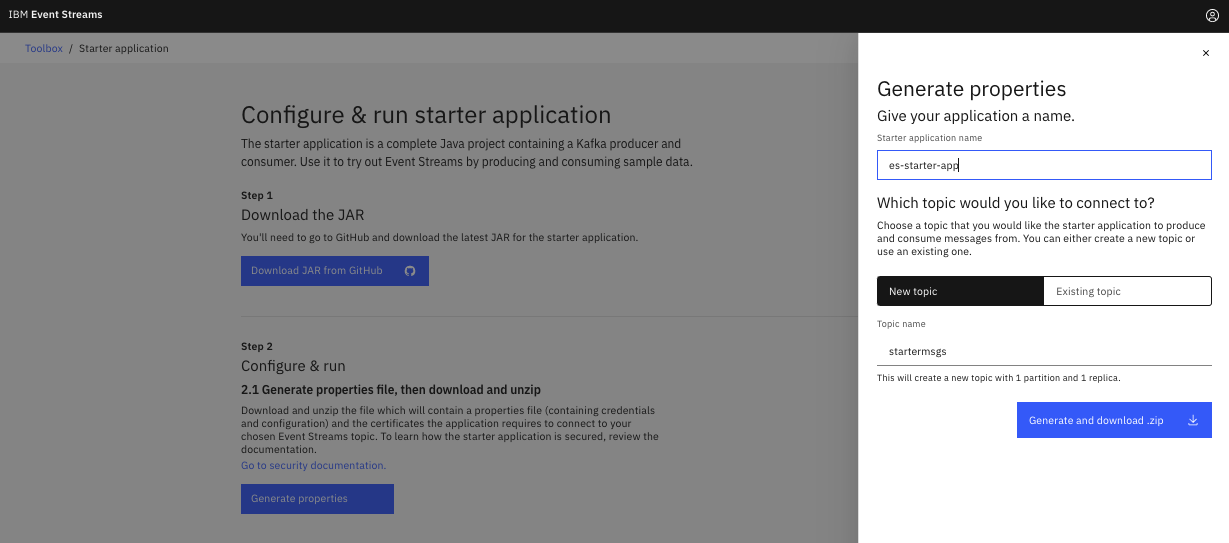

Prepare the starter app configuration

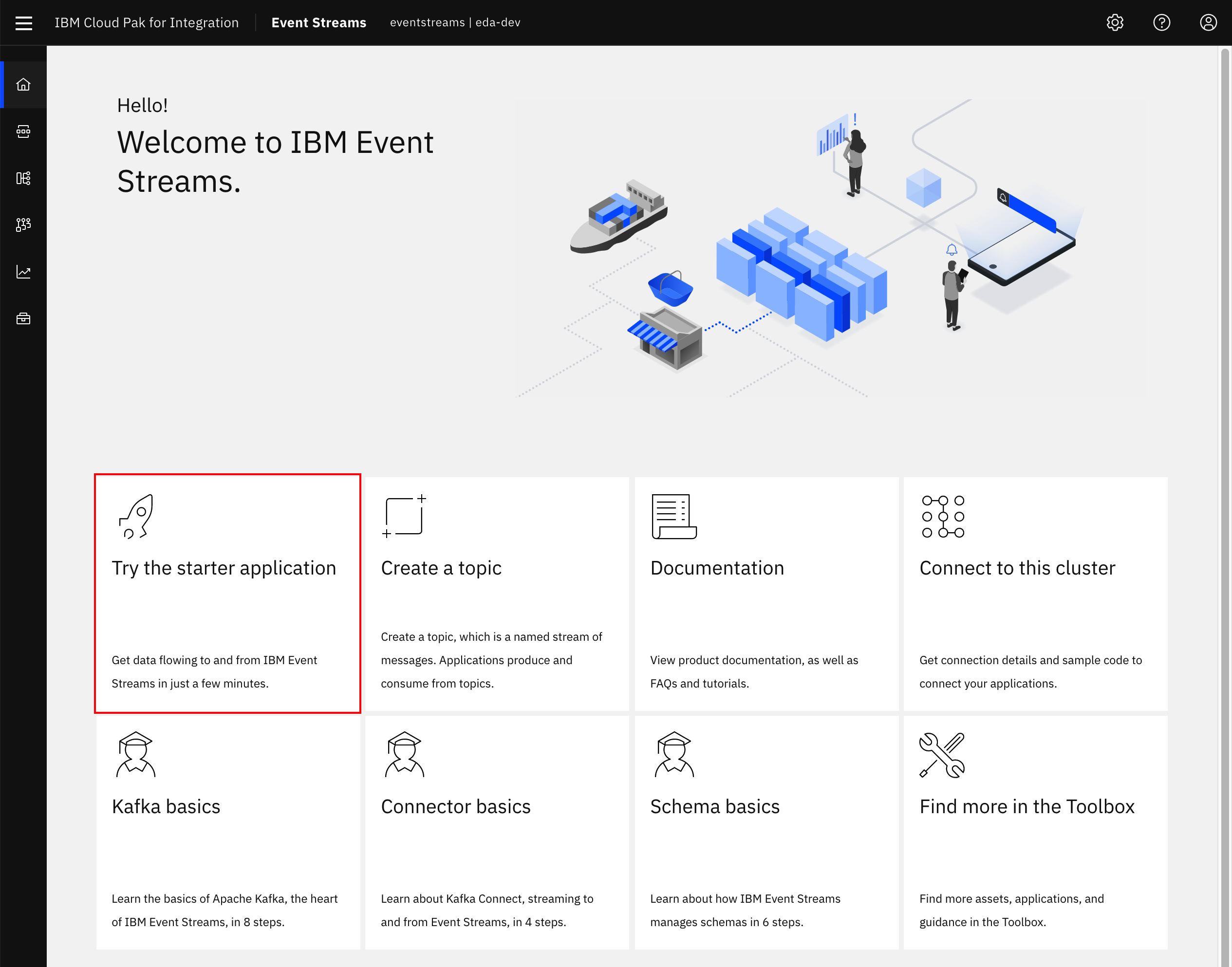

- Log in to the IBM Event Streams dashboard and, on the home (Getting Started) page, click the Try the starter application button.

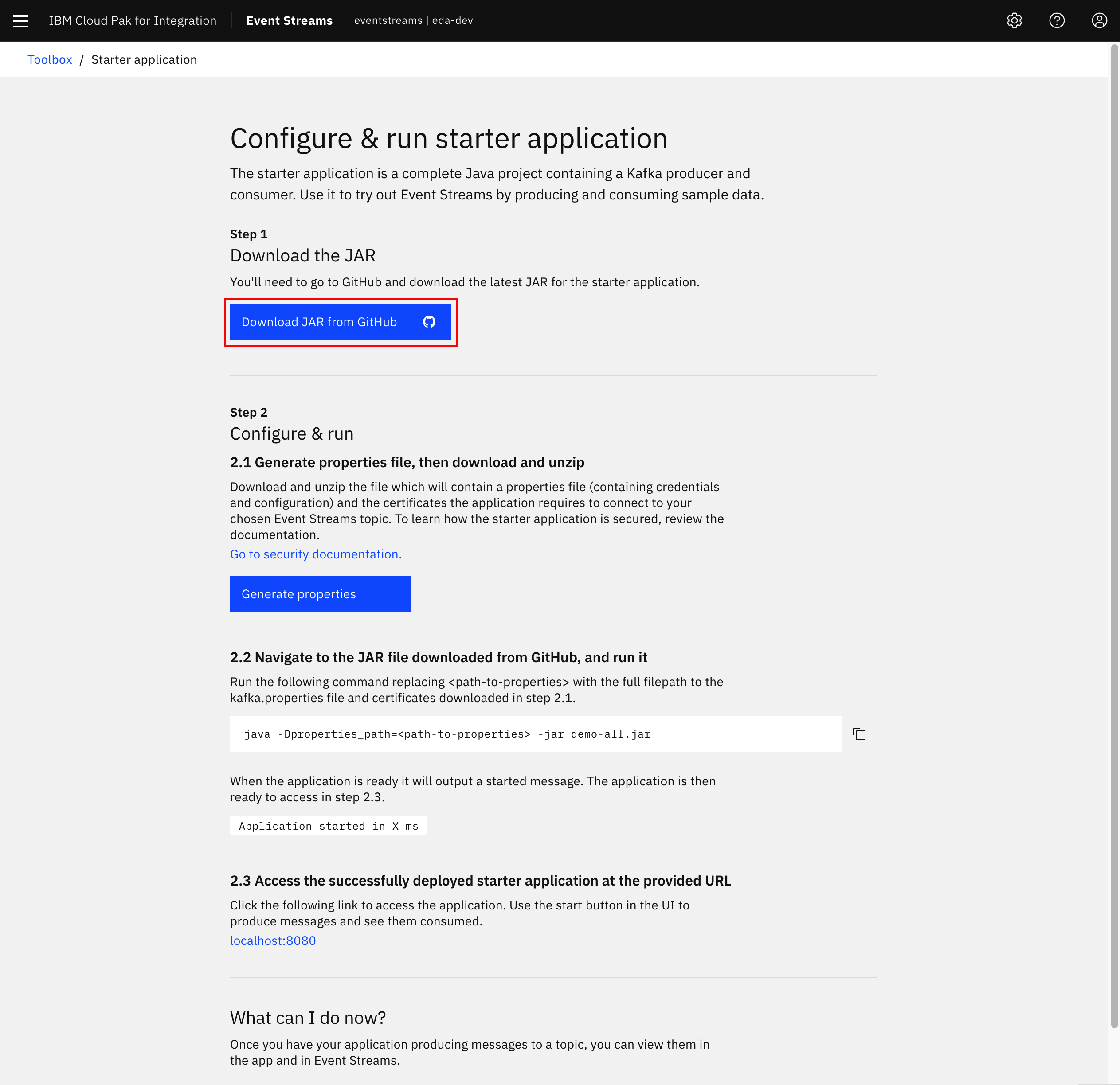

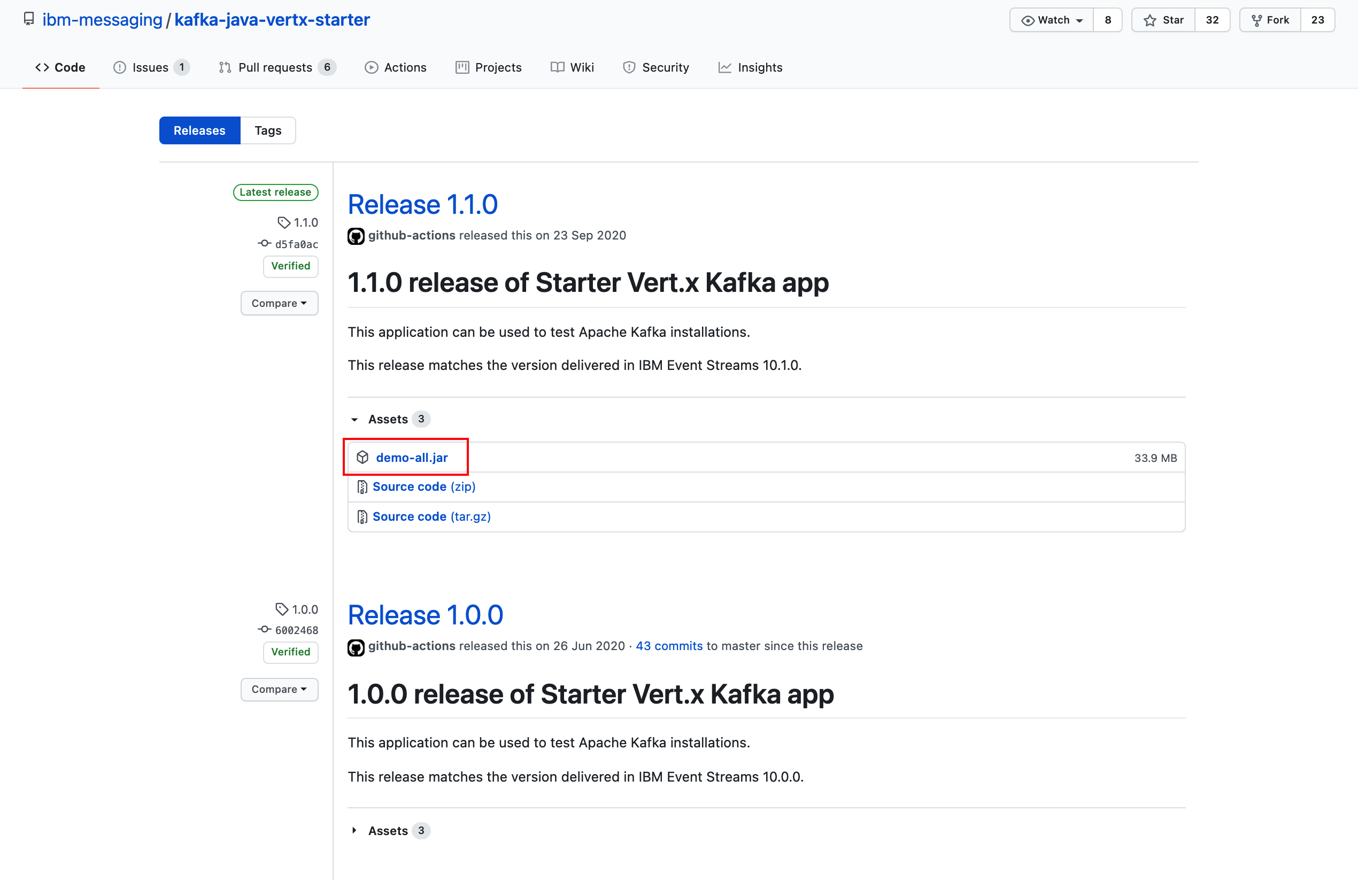

- Click Download JAR from GitHub. This will open a new window to https://github.com/ibm-messaging/kafka-java-vertx-starter/releases

- Click the link for

demo-all.jarfrom the latest release available. At the time of this writing, the latest version was1.0.0.

- Return to the

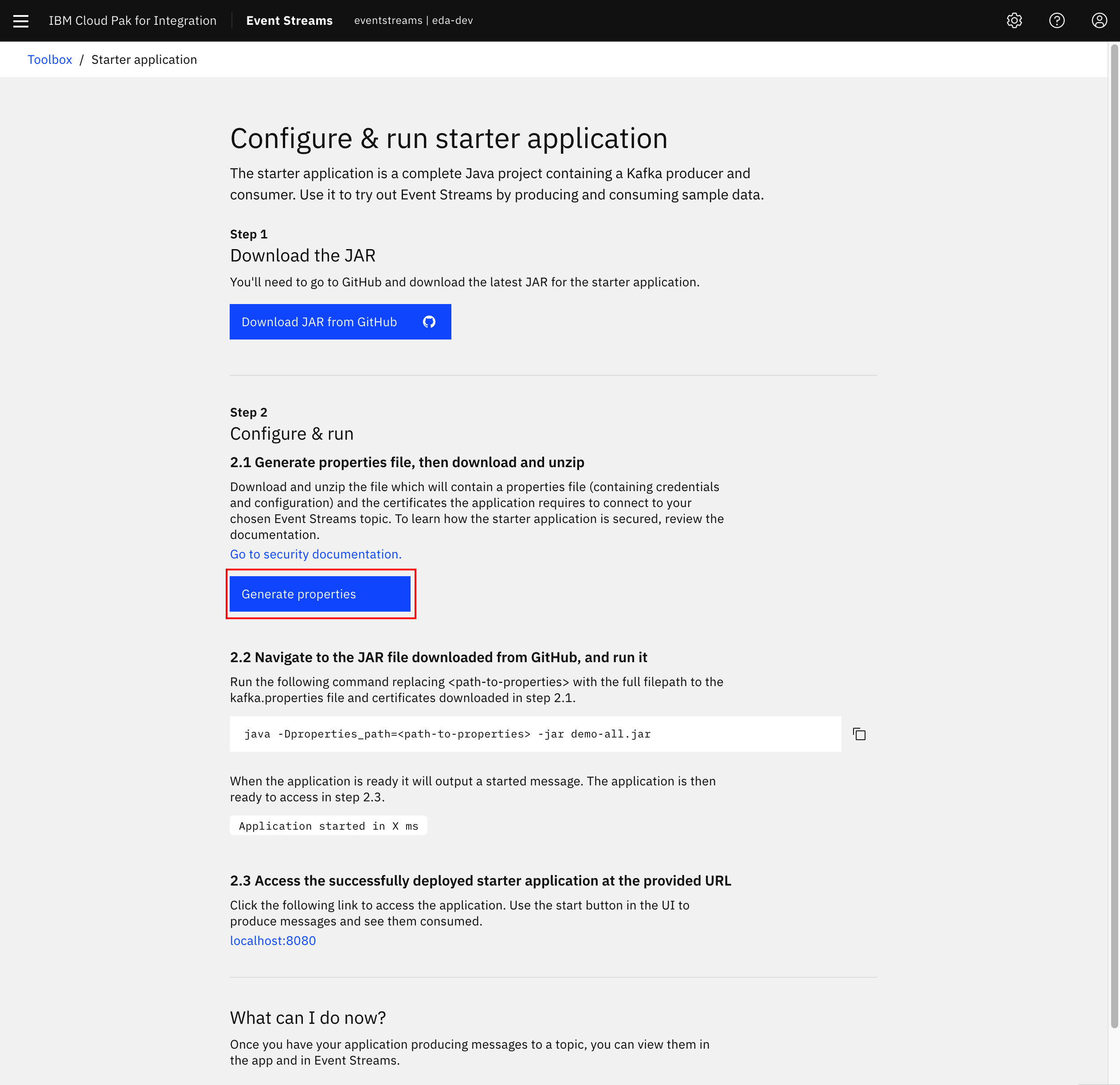

Configure & run starter applicationwindow and click Generate properties.

- In dialog that pops up from the right-hand side of the screen, enter the following information:

- Starter application name:

starter-app-[your-initials] - Leave New topic selected and enter a Topic name of

starter-app-[your-initials]. - Click Generate and download .zip

The figure above shows that you can download a ZIP file containing the application's properties, generated from the Event Streams cluster configuration, and a `p12` truststore containing the TLS certificate, to save in a local folder.
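To check the download before extracting it, a short script can list what the ZIP contains. The following is a minimal Python sketch that assumes only that the properties file ends in `.properties` and the truststore in `.p12`; the function name `inspect_starter_zip` is hypothetical, and no particular file names inside the ZIP are assumed.

```python
import zipfile


def inspect_starter_zip(zip_path):
    """Group the files in the generated ZIP by the kinds this step expects.

    Only file extensions are checked: '.properties' for the Kafka client
    configuration and '.p12' for the PKCS#12 truststore. Directory entries
    are ignored.
    """
    with zipfile.ZipFile(zip_path) as archive:
        names = [info.filename for info in archive.infolist() if not info.is_dir()]
    return {
        "properties": [n for n in names if n.endswith(".properties")],
        "truststores": [n for n in names if n.endswith(".p12")],
        "other": [n for n in names if not n.endswith((".properties", ".p12"))],
    }
```

For example, calling `inspect_starter_zip("starter-app.zip")` (a hypothetical file name) on a complete download should report one entry under `properties` and one under `truststores`.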

- In a terminal window, unzip the ZIP file generated in the previous step and move the `demo-all.jar` file into the same folder.

- Review the extracted `kafka.properties` file to understand how Event Streams has generated the credentials and configuration information this sample application uses to connect.
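Because `kafka.properties` contains credentials, it helps to review it without echoing secrets to the terminal. The following is a minimal Python sketch of a simplified Java-style `.properties` reader with redaction; the helper names and the choice of which keys count as sensitive are assumptions for illustration, not something Event Streams defines.

```python
from pathlib import Path

# Substrings that mark a key as sensitive. This list is an assumption based on
# standard Kafka client settings, not something Event Streams defines.
SENSITIVE_MARKERS = ("password", "sasl.jaas.config")


def _store(props, logical_line):
    """Split one logical line at its first '=' or ':' and store the pair."""
    cuts = [i for i in (logical_line.find("="), logical_line.find(":")) if i != -1]
    if cuts:
        cut = min(cuts)
        props[logical_line[:cut].strip()] = logical_line[cut + 1:].strip()
    else:
        props[logical_line.strip()] = ""  # a bare key with an empty value


def parse_properties(text):
    """Parse Java-style .properties text into a dict (simplified).

    Handles '#'/'!' comments, 'key=value' and 'key: value' pairs, and values
    continued with a trailing backslash. Escape sequences (such as unicode
    escapes) and whitespace-only separators are not handled.
    """
    props = {}
    logical = ""
    for raw in text.splitlines():
        line = raw.strip()
        if not logical and (not line or line[0] in "#!"):
            continue  # skip blank lines and comments
        if line.endswith("\\"):
            logical += line[:-1]  # continuation: join with the next line
            continue
        _store(props, logical + line)
        logical = ""
    if logical:
        _store(props, logical)  # file ended on a continuation line
    return props


def load_properties(path):
    """Read and parse a .properties file from disk."""
    return parse_properties(Path(path).read_text(encoding="utf-8"))


def redacted(props):
    """Return a copy of props with sensitive values masked for display."""
    return {
        key: "****" if any(m in key.lower() for m in SENSITIVE_MARKERS) else value
        for key, value in props.items()
    }
```

For example, `for key, value in redacted(load_properties("kafka.properties")).items(): print(key, "=", value)` lists every setting while masking passwords and the SASL JAAS configuration.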

Run starter application

-

This Starter application will run locally to the user's laptop with the command:

1. As an alternate method, we have packaged this app in a docker image:quay.io/ibmcase/es-demo -

Wait until you see the string

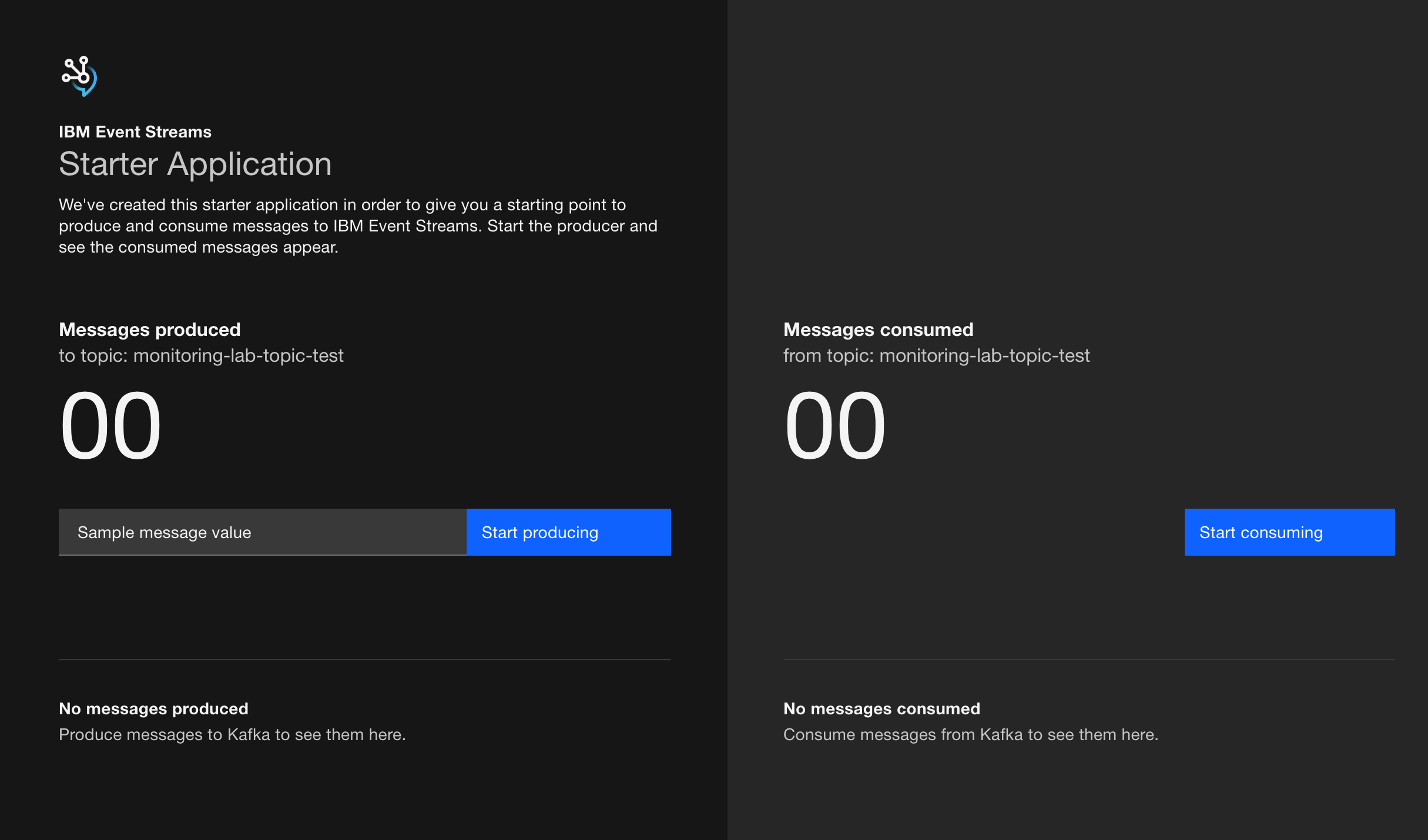

Application started in X msin the output and then visit the application's user interface viahttp://localhost:8080.
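If you launch the application from a script rather than by hand, the wait step can be automated by scanning the output for the startup message. This minimal Python sketch assumes the message appears on a single output line with a number in place of X; the function name `wait_for_startup` is hypothetical.

```python
import re

# Matches the readiness line, e.g. "Application started in 1234 ms".
# Any log prefix before it is tolerated, as is "ms" with or without a space.
STARTED = re.compile(r"Application started in (\d+)\s*ms")


def wait_for_startup(lines):
    """Consume output lines until the startup message appears.

    Returns the reported startup time in milliseconds, or None if the
    output ends first. `lines` can be any iterable of strings, such as the
    stdout of a subprocess opened in text mode.
    """
    for line in lines:
        match = STARTED.search(line)
        if match:
            return int(match.group(1))
    return None
```

For example, with a `subprocess.Popen` handle `proc` opened with `stdout=subprocess.PIPE, text=True`, `wait_for_startup(proc.stdout)` returns once the message is printed. Keep draining `proc.stdout` afterwards (for example in a background thread) so the application does not block on a full pipe.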

-

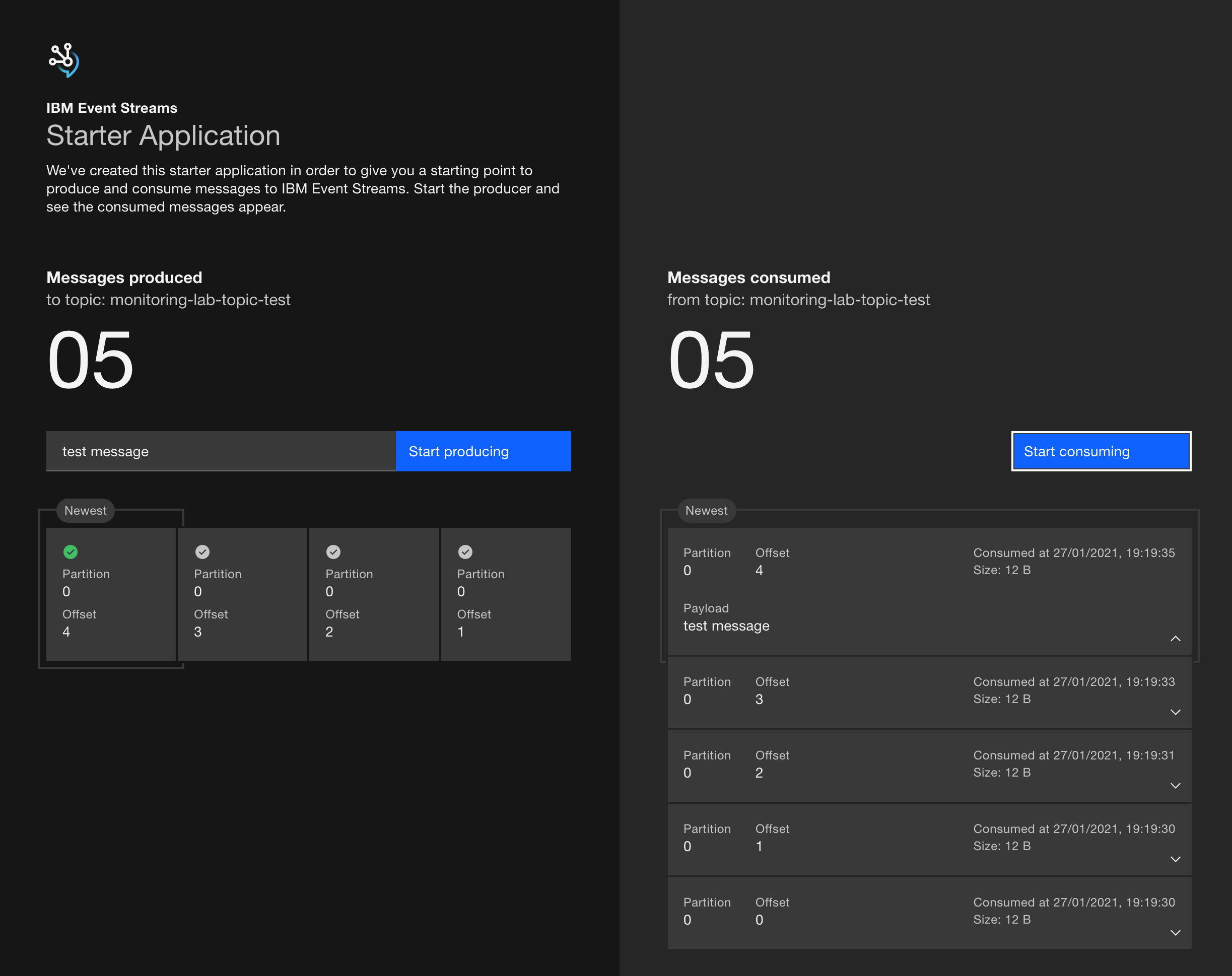

Once in the User Interface, enter a message to be contained for the Kafka record value then click Start producing.

-

Wait a few moments until the UI updates to show some of the confirmed produced messages and offsets, then click on Start consuming on the right side of the application.

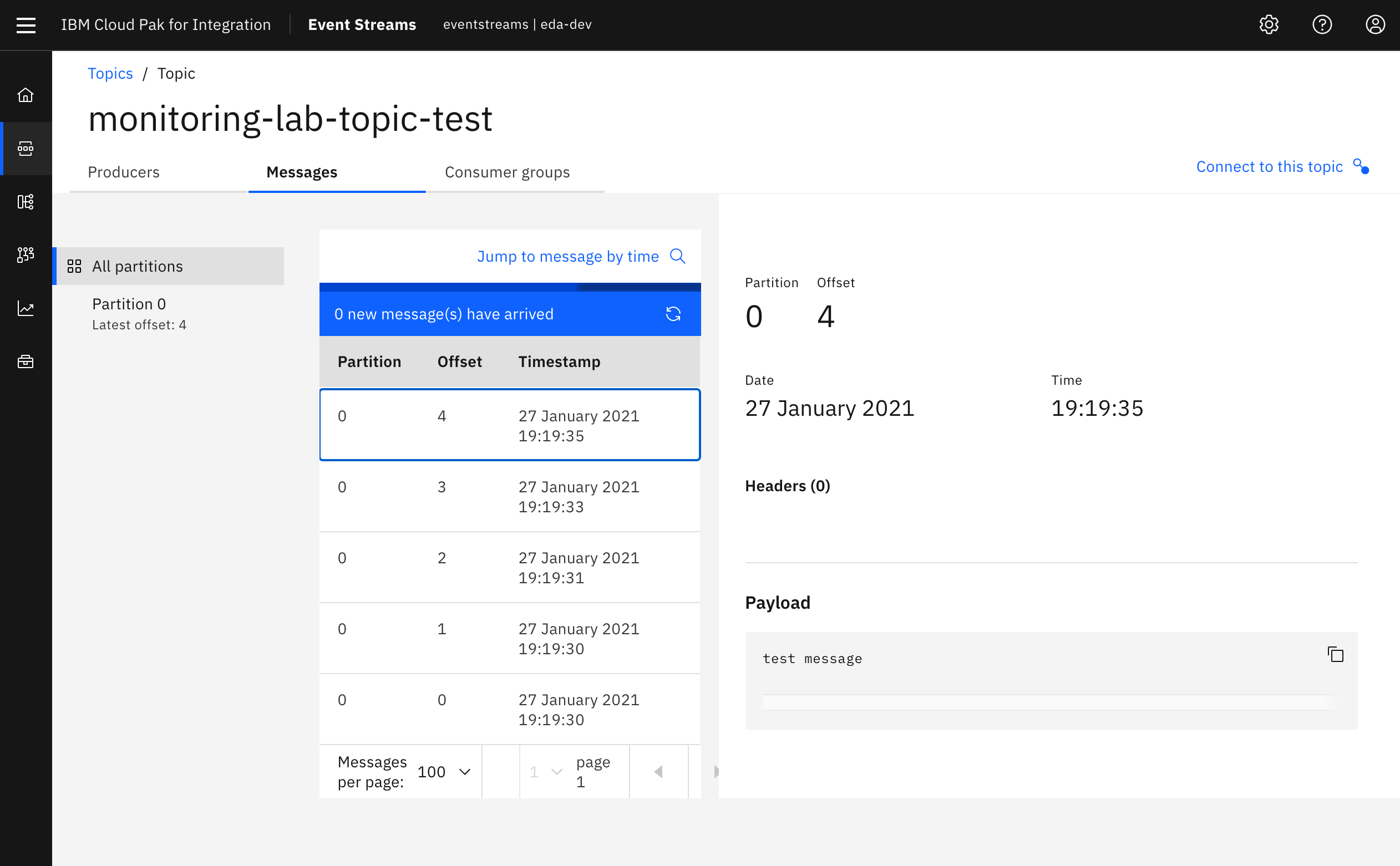
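The offsets shown in the UI come from Kafka's append-only log: each produced record receives the next offset in its partition, and a consumer tracks its own position in that log. The Python sketch below is a conceptual, in-memory model of a single partition that illustrates this idea; it is not the Kafka client API, and the class and method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PartitionLog:
    """Conceptual in-memory stand-in for one topic partition (not Kafka itself)."""

    records: list = field(default_factory=list)

    def produce(self, value):
        # A new record's offset is its position in the append-only log.
        self.records.append(value)
        return len(self.records) - 1

    def consume(self, position, max_records=10):
        # Read up to max_records starting at `position`; return the batch
        # as (offset, value) pairs plus the consumer's next position.
        end = min(position + max_records, len(self.records))
        batch = [(offset, self.records[offset]) for offset in range(position, end)]
        return batch, position + len(batch)
```

In this model, a consumer starting from offset 0 also sees records produced before it began reading, because reading a record does not remove it from the log.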

- In the IBM Event Streams user interface, go to the topic you are sending messages to and confirm that the messages have arrived.

- You can leave the application running for the rest of the lab, or you can take any of the following actions:

  - To stop the application from producing, click Stop producing.

  - To stop the application from consuming, click Stop consuming.

  - To stop the application entirely, press Control+C in the terminal session where the application is running.

To generate higher loads, you can use an alternative sample application from the official documentation.

Deploy to OpenShift

This application can also be deployed to OpenShift. Here are the steps:

- Use the same `kafka.properties` and `truststore.p12` files you downloaded with the starter application to create two Kubernetes secrets holding these files in your OpenShift cluster.

- Clone the following GitHub repo, which contains the Kubernetes artifacts that run the starter application.

- Change to the directory that contains those Kubernetes artifacts.

- Deploy the Kubernetes artifacts.

- Get the route to the starter application running on your OpenShift cluster.

- Point your browser to that URL to work with the IBM Event Streams starter application.
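The secret-creation and route steps above can also be scripted. This minimal Python sketch assumes you apply each generated Secret with `oc apply -f -` and read the Route with `oc get route NAME -o json`; the secret names in the usage note are hypothetical and must match whatever names the cloned Kubernetes artifacts reference.

```python
import base64
from pathlib import Path


def secret_manifest(name, file_path):
    """Build a Kubernetes Secret (type Opaque) holding a single file.

    The file's base name becomes the data key, the same default that
    `oc create secret generic NAME --from-file=PATH` uses.
    """
    path = Path(file_path)
    encoded = base64.b64encode(path.read_bytes()).decode("ascii")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {path.name: encoded},
    }


def route_url(route):
    """Turn an OpenShift Route object (parsed JSON) into a browser URL.

    Uses https when spec.tls is set, http otherwise, and appends spec.path
    if the route defines one.
    """
    spec = route["spec"]
    scheme = "https" if spec.get("tls") else "http"
    return f"{scheme}://{spec['host']}{spec.get('path', '')}"
```

For example, `json.dumps(secret_manifest("starter-app-truststore", "truststore.p12"))` piped to `oc apply -f -` creates one of the two secrets, and `route_url(json.loads(output))` turns the JSON printed by `oc get route` into the address to open.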

The source code for this application is in this git repo: ibm-messaging/kafka-java-vertx-starter.

Even though the application runs inside OpenShift, it connects through the external Kafka listener, because that is the listener the `kafka.properties` file generated by IBM Event Streams uses by default. To avoid overcomplicating this task, we use what IBM Event Streams provides out of the box.