Get started (101 content)

This chapter helps you understand what event-driven architecture (EDA) is, what Kafka is, and how to consider messaging as a service as a foundation for event-driven solutions. It also gets you started with IBM Event Streams and IBM MQ.

Important concepts around event-driven solutions and event-driven architecture

Start by agreeing on the terms and definitions used throughout this body of knowledge, with clear definitions for events, event streams, event backbone, event sources, and so on.

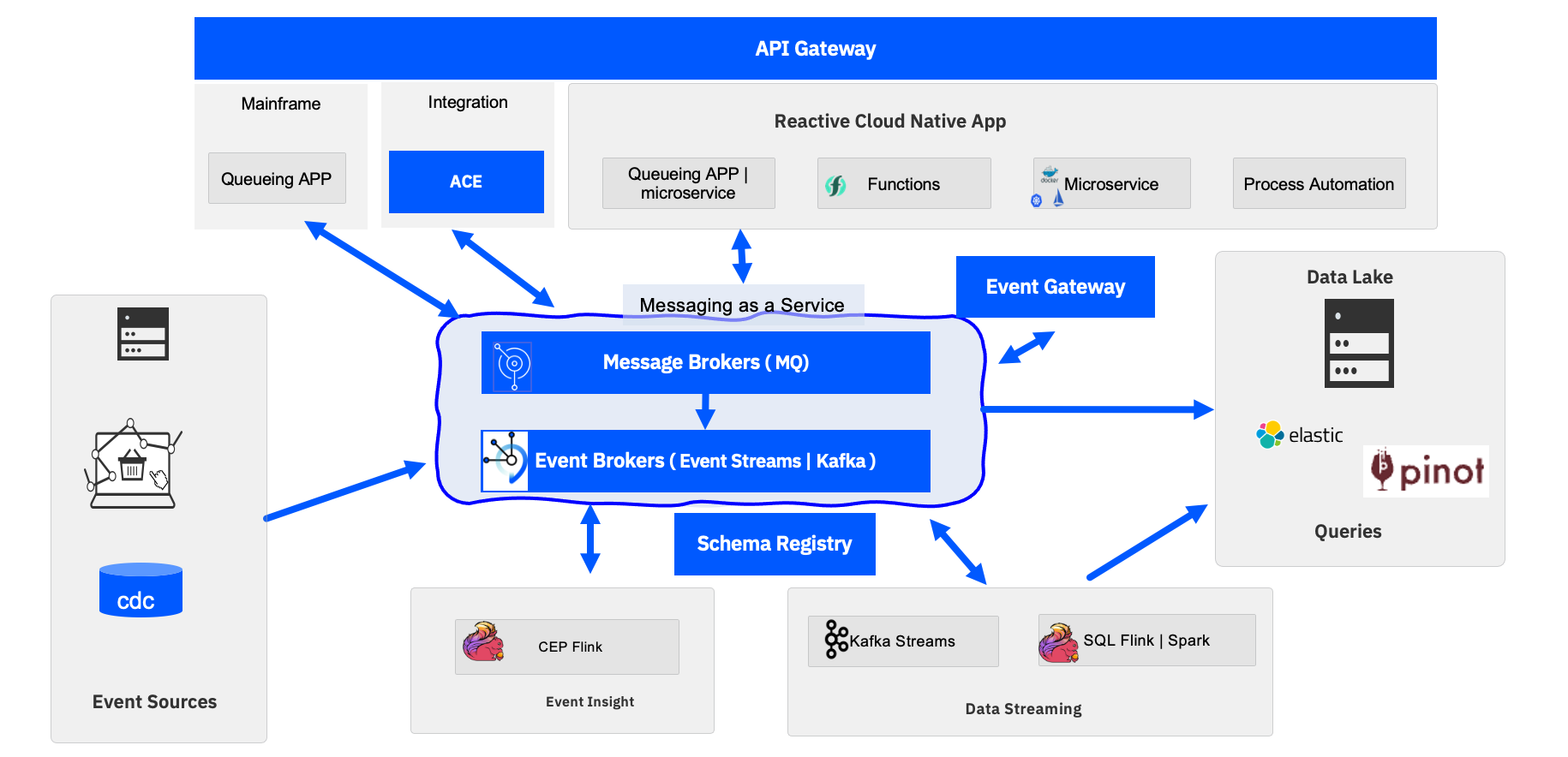

The main up-to-date reference architecture presentation is in this section with all the component descriptions.

This architecture was built from many customer engagements, projects, and reviews, and it is designed to support the most common technical use cases we have observed for EDA adoption so far.

Understand Kafka technology

You may want to read the [Kafka documentation](https://kafka.apache.org/documentation/#intro_nutshell) to understand the main concepts, but we have also summarized them in this chapter. If you like quick videos, here is a seven-minute review of what Kafka is:

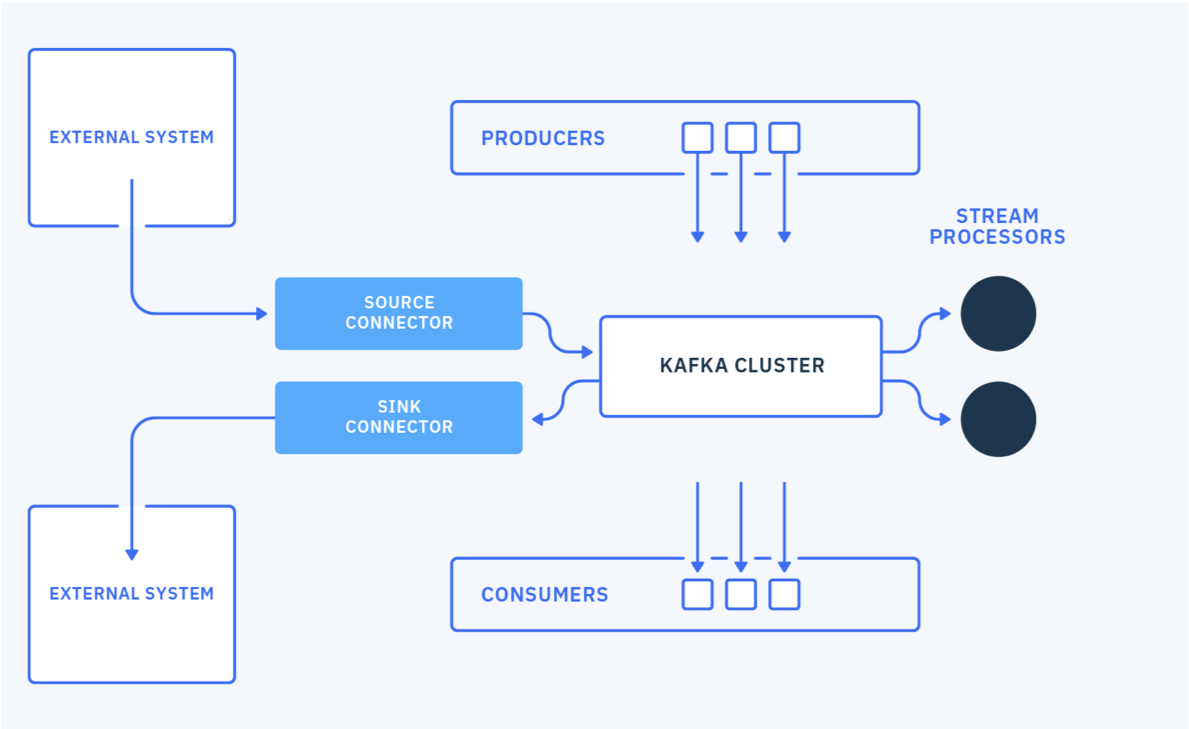

The major building blocks are summarized in this diagram:

To learn more about:

- For Kafka Cluster see this 101 content

- For Producer introduction see this section

- For Consumer introduction see this section

- For Kafka Connector framework introduction
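As a rough mental model of those building blocks, the toy sketch below mimics in memory how a topic is split into append-only partitions, how a record key selects a partition, and how each consumer group tracks its own offsets. It is not the Kafka client API: the class names `ToyTopic` and `ToyConsumerGroup`, the `orders` topic, and the use of `zlib.crc32` as the key hash are all assumptions made for illustration.

```python
import zlib
from collections import defaultdict


class ToyTopic:
    """In-memory stand-in for a topic: a fixed number of append-only partitions."""

    def __init__(self, name, num_partitions):
        self.name = name
        self.partitions = [[] for _ in range(num_partitions)]

    def produce(self, key, value):
        # Records with the same key always land in the same partition,
        # which is what preserves per-key ordering.
        # crc32 is a stand-in hash chosen for this sketch only.
        partition = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        self.partitions[partition].append((key, value))
        offset = len(self.partitions[partition]) - 1
        return partition, offset


class ToyConsumerGroup:
    """Tracks, per partition, the next offset this group will read."""

    def __init__(self, topic):
        self.topic = topic
        self.next_offset = defaultdict(int)

    def poll(self, partition):
        start = self.next_offset[partition]
        records = self.topic.partitions[partition][start:]
        self.next_offset[partition] = start + len(records)
        return records


topic = ToyTopic("orders", num_partitions=3)
p1, o1 = topic.produce("order-1", "created")
p2, o2 = topic.produce("order-1", "shipped")

# Two independent groups read the same records without affecting each other.
billing = ToyConsumerGroup(topic)
audit = ToyConsumerGroup(topic)
billing_records = billing.poll(p1)
audit_records = audit.poll(p1)
billing_again = billing.poll(p1)  # nothing new since the last poll
```

Both groups receive the two `order-1` records in production order, and a second poll by the same group returns nothing until new records arrive.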

So what is IBM Event Streams?

Event Streams is the IBM packaging of Kafka for the enterprise. It combines an award-winning integrated user interface, a Kubernetes operator based on open source Strimzi, an open source based schema registry, and connectors. The philosophy is to bring together leading open source products to support the development of event streaming solutions on Kubernetes platforms. Combined with event endpoint management, no-code integration, and IBM MQ, Event Streams brings Kafka to the enterprise.

It is available as a managed service or as part of Cloud Pak for Integration. See this developer.ibm.com article about Event Streams on Cloud for a quick introduction.

The Event Streams product documentation as part of IBM Cloud Pak for Integration is here.

Runnable demonstrations

- You can install the product using the Operator Hub, IBM Catalog and the OpenShift console. This lab can help you get an environment up and running quickly.

- You can then run the Starter Application as explained in this tutorial.

- You may need to demonstrate how to deploy Event Streams with the CLI; this lab teaches you how to use our GitOps repository.

- To go deeper into GitOps adoption, this tutorial uses two public repositories to demonstrate the automatic deployment of Event Streams and an event-driven solution.

- Run a simple Kafka Streams and Event Streams demo for real-time inventory using these demonstration scripts and GitOps repository.

Event Streams resource requirements

See the detailed tables in the product documentation.

Event Streams environment sizing

A lot of information is available for sizing:

- Cloud Pak for Integration system requirements

- Foundational service specifics

- Event Streams resource requirements

- Kafka has a sizing tool (eventsizer); its results may be questionable, but some people use it.

In general, start small and then increase the number of nodes over time.

The minimum for production is 5 brokers and 3 ZooKeeper nodes.
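For a first-pass feel of how workload translates into disk per broker, a back-of-envelope calculation can help before turning to the product tables. The sketch below is only an assumption-laden approximation, not the method used by the product documentation or by the eventsizer tool: the function name, the 30% headroom, and the sample workload figures are hypothetical, and compression, index files, and uneven partition distribution are ignored.

```python
import math


def estimate_disk_per_broker_gb(msgs_per_sec, avg_msg_bytes, retention_days,
                                replication_factor, brokers, headroom=0.3):
    """Rough per-broker disk estimate in GB (decimal), rounded up."""
    bytes_per_day = msgs_per_sec * avg_msg_bytes * 86_400
    # Every record is stored once per replica for the whole retention period.
    total_bytes = bytes_per_day * retention_days * replication_factor
    # Assumes replicas are spread evenly across brokers (hypothetical ideal case).
    per_broker_bytes = total_bytes / brokers
    return math.ceil(per_broker_bytes * (1 + headroom) / 1e9)


# Hypothetical workload: 1,000 messages/s of 1,000 bytes, kept for 7 days,
# replicated 3 times, on the 5-broker minimum mentioned above.
small = estimate_disk_per_broker_gb(1_000, 1_000, 7, 3, brokers=5)
# Same workload spread over 10 brokers.
larger_cluster = estimate_disk_per_broker_gb(1_000, 1_000, 7, 3, brokers=10)
```

With these sample numbers the estimate is 472 GB per broker on 5 brokers and 236 GB on 10, which illustrates why starting small and adding nodes later is workable as long as retention and replication are chosen deliberately.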

Use Cases

We have different levels of use cases in this repository:

The generic use cases which present the main drivers for EDA adoption are summarized here.

But architects need to search for and identify a set of key requirements to be supported by their own EDA:

- get visibility of the new data created by existing applications in near real-time to get better business insights and propose new business opportunities to the end-users / customers. This means adopting data streaming, and intelligent query solutions.

- integrate with existing transactional systems, and continue to do transaction processing while adopting eventual data consistency, a characteristic of distributed systems. (Cloud-native serverless functions and microservices are distributed systems.)

- move to asynchronous communication between serverless and microservices, so they become more responsive, resilient, and scalable (characteristics of ‘Reactive applications’).

- become loosely coupled while still understanding the API contracts, whether the APIs are synchronous (REST, SOAP) or asynchronous (queue and topic).

- get clear visibility of data governance, with cataloging applications to be able to continuously assess which apps securely access what, with what intent.

We have done some reference implementations to illustrate the major EDA design patterns:

| Scenario | Description | Link |
|---|---|---|
| Shipping fresh food over sea (external) | The EDA solution implementation using event-driven microservices in different languages, and demonstrating different design patterns. | EDA reference implementation solution |
| Vaccine delivery at scale (external) | An EDA and cross-Cloud Pak solution | Vaccine delivery at scale |
| Real time anomaly detection (external) | Develop and apply a machine learning predictive model on event streams | Refrigerator container anomaly detection solution |

Why microservices are becoming event-driven and why we should care?

This article explains why microservices are becoming event-driven and relates to some design patterns and potential microservice code structure.

Messaging as a service?

Yes, EDA is not just Kafka: both IBM MQ and Kafka should be part of any serious EDA deployment. The main reasons are explained in this fit for purpose section and in the messaging versus eventing presentation.

An introduction to MQ is summarized in this note in the 301 learning journey. Developers need to get the MQ developer badge, as it covers the basics of developing solutions on top of an MQ broker.

Fit for purpose

We have a general fit for purpose document that can help you review messaging versus eventing, MQ versus Kafka, and also Kafka Streams versus Apache Flink.
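One core difference behind the messaging versus eventing comparison can be sketched in a few lines. The toy example below uses plain Python structures rather than MQ or Kafka clients, and the reader names and payloads are hypothetical. It contrasts a point-to-point queue, where a message is removed once a consumer takes it, with an append-only event log, where each reader keeps its own position and can replay.

```python
from collections import deque

# Messaging style: a point-to-point queue. Consuming a message removes it.
queue = deque()
queue.append("pay invoice 42")
worker_a_got = queue.popleft()
worker_b_got = queue.popleft() if queue else None  # nothing left for a second worker

# Eventing style: an append-only log. Reading does not remove anything;
# each reader only advances its own offset.
log = []
offsets = {"analytics": 0, "notifications": 0}


def read(reader):
    """Return the records this reader has not seen yet and advance its offset."""
    records = log[offsets[reader]:]
    offsets[reader] = len(log)
    return records


log.append("invoice 42 paid")
analytics_got = read("analytics")
notifications_got = read("notifications")

# Replay: a reader can rewind its offset and process the history again.
offsets["analytics"] = 0
replayed = read("analytics")
```

The queue suits commands that must be handled once by one worker, while the log suits facts that several independent consumers want to read, possibly more than once.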

Getting Started With Event Streams

With IBM Cloud

The easiest way to start is to create an Event Streams service instance on IBM Cloud and then connect a starter application. See this service at this URL.

Running on your laptop

As a developer, you may want to start quickly by playing with a local Kafka broker and MQ broker on your own laptop. We have developed compose files for this in this gitops project under the RunLocally folder.

```shell
# Kafka based on the Strimzi image, plus an MQ container
docker-compose -f maas-compose.yaml up &

# Kafka only
docker-compose -f kafka-compose.yaml up &
```
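Once the containers are starting, a quick TCP check can tell you whether the broker is accepting connections before you point an application at it. The sketch below assumes the Kafka listener is exposed on `localhost:9092`, which is Kafka's default port; the compose files may map a different port, so adjust accordingly. The function name `broker_reachable` is hypothetical, and a successful connection only shows that the port is open, not that the broker is fully ready.

```python
import socket


def broker_reachable(host="localhost", port=9092, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and name resolution failures.
        return False
```

Calling `broker_reachable()` in a retry loop is usually enough for a local setup; for anything beyond that, a real Kafka client or the admin tooling gives a more reliable readiness signal.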

Running on OpenShift

Finally, if you have access to an OpenShift cluster, version 4.7 or later, you can easily deploy Event Streams as part of IBM Cloud Pak for Integration using the OpenShift Admin console.

See this simple step-by-step tutorial, which covers how to deploy and configure an Event Streams cluster using the OpenShift Admin Console or the oc CLI and our manifests from our EDA gitops catalog repository.

Show and tell

Once you have deployed a cluster, access the administration console and use the very good getting started application.

Automate everything with GitOps

If you want to adopt a pure GitOps approach, we have demonstrated how to use ArgoCD (OpenShift GitOps) to deploy an Event Streams cluster and maintain its state in a simple real-time inventory demonstration in this repository.

Methodology: getting started on a good path

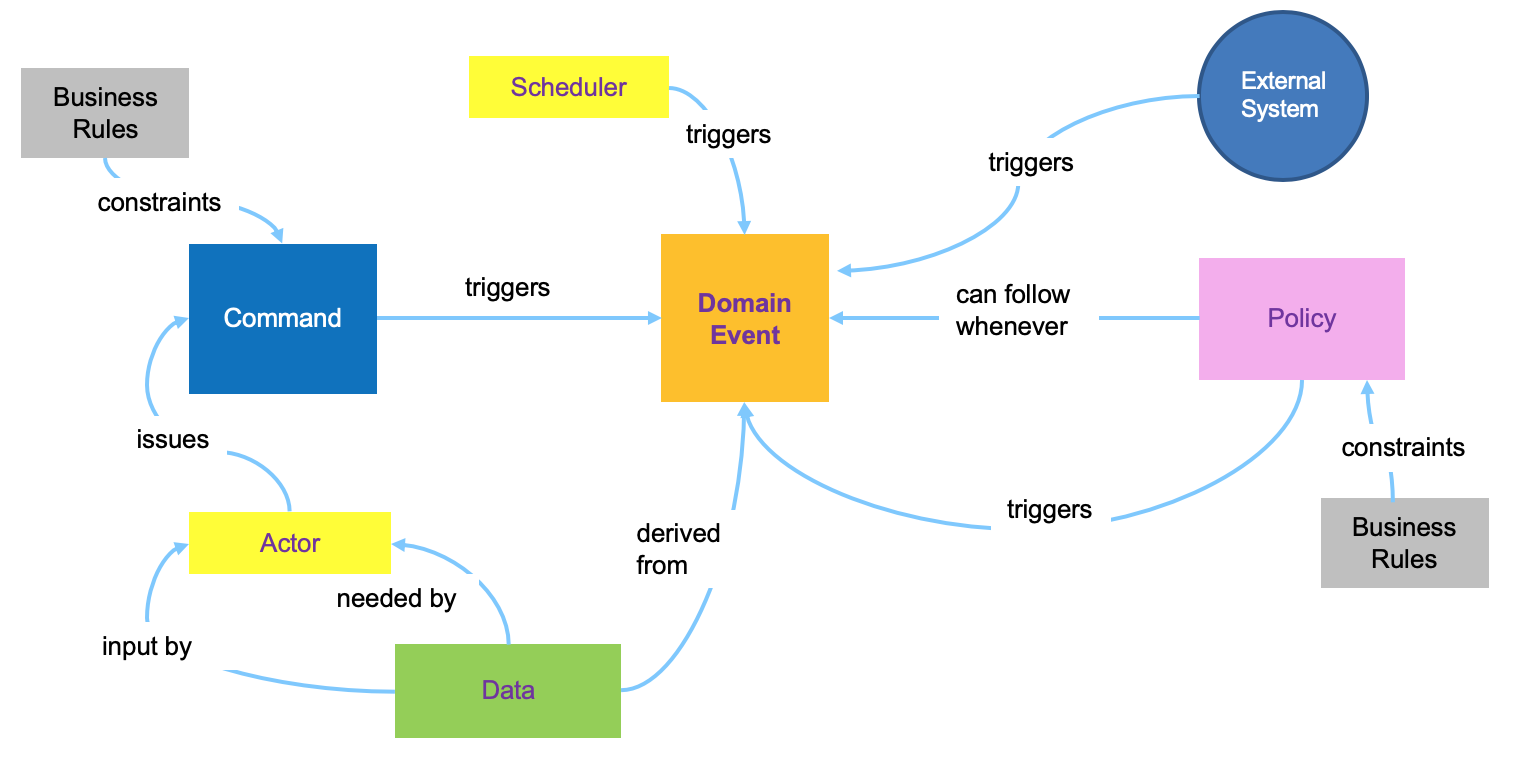

We have adopted the Event Storming workshop to discover the business process from an event point of view, and then apply domain-driven design (DDD) practices to discover aggregates, business entities, commands, actors, and bounded contexts. Bounded contexts help define the scope of microservices and business services. Below is a figure to illustrate the DDD elements used during those workshops:

The bounded context map defines the solution. Using a lean startup approach, we focus on defining a minimum viable product and preselect a set of services to implement.

We have detailed how we apply this methodology for a Vaccine delivery solution in this article, which can help you understand how to use the method for your own project.

Frequently asked questions

A separate FAQ document groups the most common questions around Kafka.